The Opportunity We’re Missing in the AI Dread Era

You don’t need us to tell you that AI has people on edge, worried about their jobs and livelihoods, markets and institutions, and the direction of the country and the world.

And when you scan the headlines, it’s hard to blame them. AI is moving quickly—faster than most people’s ability to fully understand it—and it already appears to be reshaping how work gets done. As one software marketer recently told the Wall Street Journal, “There’s almost nobody who is feeling positive vibes about their job right now. We’re in the AI dread era.”

In this dread era, conversations tend to fall into three broad categories:

A new AI model has arrived.

Last week’s breakthrough is now obsolete.

AI is actively eliminating knowledge worker jobs.

And yours is next.

AI is on a collision course with the global financial system.

You are not prepared.

While some of this may ultimately prove true, it is not the full story.

This piece—which came out of a conversation between our CEO, Jana De Anda, and Kristi McFarland, Chief People and Marketing Officer for Imperative Logistics—offers a more hopeful, and we believe more accurate, perspective.

This is not to refute the risks or dismiss the disruption, but to balance the prevailing narrative and introduce a different frame. Framing matters, especially when decisions are being made quickly, under pressure, and at scale.

Here is what we believe:

The speed of AI advancement is prompting people and organizations to move—or freeze. Decisions are being made without sufficient analysis.

Much of today’s anxiety is not entirely about AI.

It reflects broader, long‑standing economic and social fears.

Despite increasingly dire predictions, we have not lost.

In fact, most of us haven’t seriously started playing yet.

We have a rare opportunity to redefine what value means in our generation.

To realize it, we must engage actively and get comfortable with change.

Speed, Fear, and the Drift Toward Passivity

Speed and fear can conspire to make people feel powerless, and when people feel powerless they tend to sideline themselves—avoiding the conversations, decisions, and experiments that will shape their future.

We are watching this dynamic play out every day: Individuals and organizations vacillate between avoidance and dread, overwhelmed by the sheer pace of change and unclear about where, or how, to begin.

We are not arguing that the answer is to slow down. That would be about as productive as shouting at the rain. AI will continue to advance whether we are ready or not.

But we do believe there is a difference between moving quickly and moving blindly.

At Excelerate, our tagline (Go Faster. Smarter.) speaks to this tension directly. Businesses must operate at an ever‑accelerating pace, but speed without grounding rarely produces durable outcomes.

Moving thoughtfully, with intention and clarity, is not hesitation but rather optimistic caution and informed advancement. It is what co‑evolution looks like in practice.

When organizations take a beat, when they pause just long enough to examine assumptions, many of today’s dominant narratives begin to weaken.

The Employment Apocalypse Narrative Isn’t Holding Up

Consider the certainty with which some commentators describe an impending white‑collar employment collapse. When examined closely, the evidence is notably less dramatic.

A recently published MIT study suggests that AI‑driven labor change is more likely to unfold broadly and gradually than through sudden, sector‑specific job wipeouts. In other words, work is expected to change, not collapse.

This distinction matters. A crashing wave is a disaster. A rising tide, while disruptive, has the potential to lift many boats.

The same is true of panic surrounding recent college graduates. It is undeniably a difficult labor market for young people. But that difficulty, as Rogé Karma observed in The Atlantic, cuts across education levels and is not confined to recent college graduates.

This is less about AI abruptly choking off white‑collar opportunities and more about a broader economic slowdown.

The opacity of today’s labor market should make us more cautious about sweeping claims. When no one can say with confidence what is happening right now, certainty about the future should be met with skepticism.

But that skepticism is often absent. History suggests that transformative periods are poorly understood while they are happening. That is why we have historians: significance arrives later, with distance.

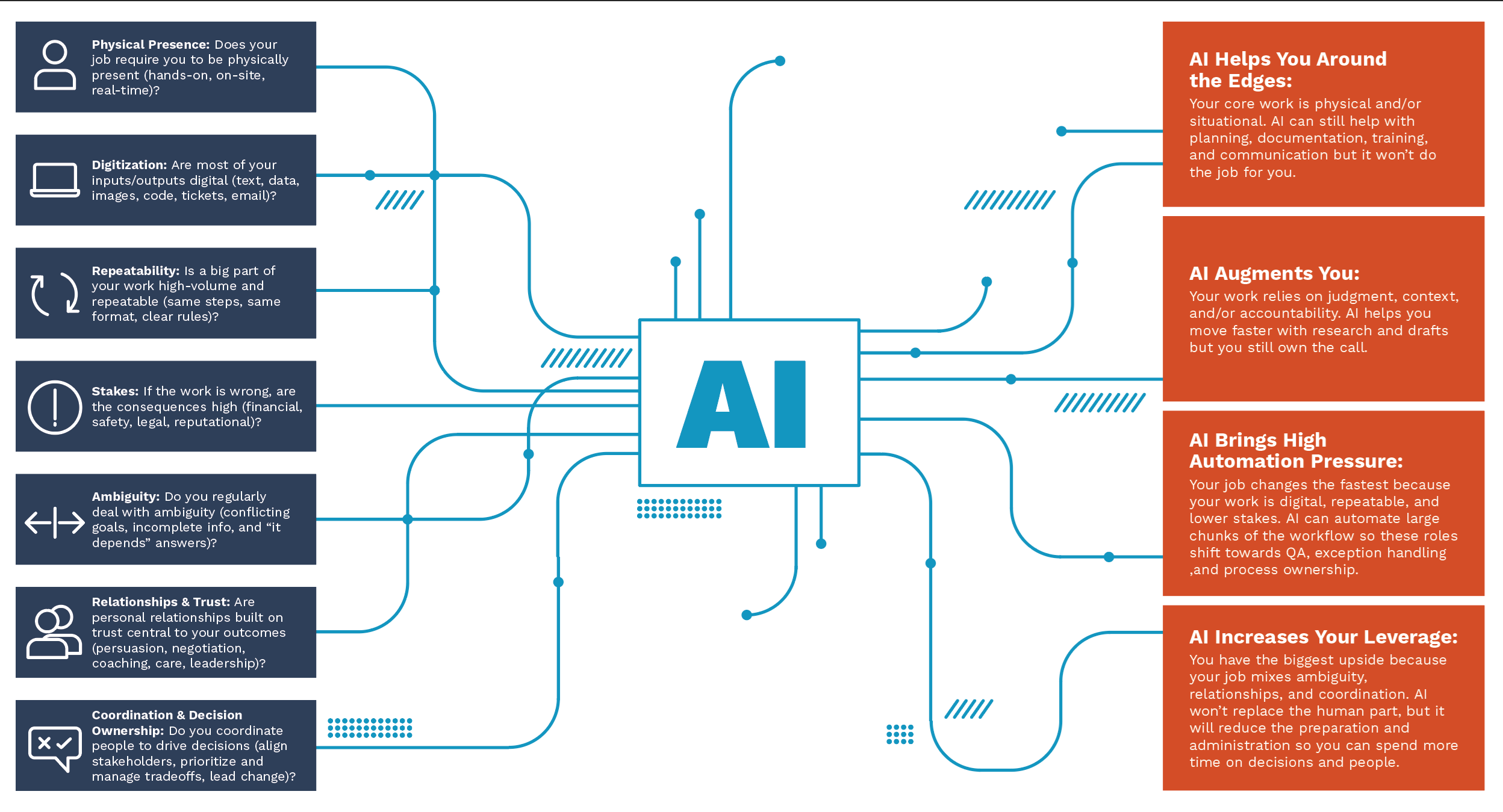

Every week brings a new list of “AI‑vulnerable” roles, presented as a countdown clock. While it would be irresponsible to ignore the real changes coming to many jobs, framing those changes exclusively as losses is unnecessarily narrow. One could just as easily ask a different question: Which roles, and which dimensions of roles, stand to benefit most from AI?

Below, we asked that very question.

What roles and attributes benefit the most from AI?

Why the COVID Analogy Cuts Both Ways

COVID is frequently invoked as a warning: this is March 2020 again, and we are underestimating the storm.

Perhaps. But there is another COVID analogy worth considering.

If, in the summer of 2020, someone had told you that the pandemic, despite all its disruptions, emotional toll, and bleak economic forecasts, would usher in a period of economic expansion and reshape how and where people work, you would have dismissed the idea outright. The visible signals all pointed in the opposite direction. How could markets thrive when people couldn’t even enter the supermarket?

Yet that is precisely what happened.

The point is not that AI disruption will be painless. Transition periods are often brutal, and this one will leave some people behind.

People we know, work with, and care about will experience real hardship. That has always been true of economic transitions.

But stagnation carries its own costs. Economies are dynamic systems where change is difficult but immobility is fatal.

As one Forbes contributor recently observed, AI is already causing economic disruption and suffering, but its end‑state effects are likely to be more positive than current narratives assume. AI is not simply replacing workers. It is changing the economics of work itself, an important distinction for long‑term outcomes.

What We’re Actually Afraid Of (And Why AI Gets the Blame)

When we slow down further, another pattern becomes visible: many of the conversations we are having about AI are not entirely about AI at all.

Borrowing from Charlie Brooker, AI increasingly functions as a kind of black mirror—reflecting our present realities and potential futures back at us. Many people do not like what they see.

In the United States, especially, AI anxiety sits atop a foundation of broader uncertainty.

It may be impolitic to discuss politics in a business blog, but when a Stanford study shows dramatically higher optimism about AI in China than in the U.S. (80% favorability versus 39%), it is worth asking why.

The American business environment of recent years has been marked by sustained inconsistency, with economic, political, and cultural instability layered on top of one another.

Uncertainty discourages investment in the future, and hiring young talent is fundamentally a long‑term bet. When the future feels opaque, organizations stop making that bet.

Beyond cyclical uncertainty, there is a quieter anxiety at play: concern about America’s position in the global order.

For decades, the U.S. has occupied a dominant economic and cultural position. AI introduces the possibility—at least in perception—of leveling parts of that playing field. While few would openly argue for permanent advantage, unease emerges when advantage appears vulnerable.

This may help explain why AI optimism is also much more pronounced in parts of the developing world where there is less privilege to fear losing. In those contexts, AI represents optionality rather than erosion.

Efficiency, Layoffs, and a Convenient Scapegoat

It is also impossible to talk about AI anxiety without talking about the economy we already had before AI arrived.

Well before generative models entered boardroom conversations, late‑stage consumer capitalism was grappling with its own structural contradictions. AI simply accelerated the reckoning.

Some economists have described AI investment as an “implicit short” against the consumer economy: if margins expand at the expense of human labor, aggregate demand eventually suffers. Many people intuit this tension even if they do not articulate it explicitly.

At the same time, corporate leaders increasingly emphasize efficiency and shareholder value. According to The Wall Street Journal, CFOs referenced efficiency on earnings calls more frequently in recent quarters than at any point since 2020.

In that environment, AI has become a convenient patsy.

Many layoffs attributed to AI replacing human workers are more accurately explained by post‑pandemic overhiring and subsequent rebalancing. AI provides political cover, simplifies the narrative, and often rewards shareholders.

Consider Block, where earlier this year Jack Dorsey announced layoffs tied to AI‑enabled organizational changes. The market responded positively. Yet Block had nearly quadrupled its headcount during the pandemic. Framed this way, the reduction was less about AI and more about right‑sizing.

Amazon’s recent workforce reductions follow a similar pattern. AI may be part of the operational story, but it is rarely the full explanation.

Again, these distinctions matter because attributing every painful decision to AI obscures agency. It lets leaders off the hook and fosters fatalism instead of responsibility.

We Haven’t Lost…We Haven’t Really Even Started

Despite the volume of defeatist narratives, one fact remains stubbornly true: We have not lost.

In many respects, we have barely begun.

AI is exceptionally good at discrete tasks. It is far less capable at generating meaning, context, and purpose.

But organizations are not merely collections of tasks; they are dynamic systems of human interaction, judgment, and synthesis.

We often forget this when debates fixate on task replacement instead of organizational creation.

Human collaboration produces something more than efficiency. It produces culture, insight, and momentum.

Anyone who has spent time inside a healthy organization recognizes the unmistakable hum—a thousand micro‑interactions unfolding in real time, creating outcomes no single participant could design alone.

AI does not care about your brand, your history, or your values. Even if it could simulate concern, care itself cannot be automated.

Philosopher Hans‑Georg Gadamer described understanding as a “fusion of horizons”—meaning emerges through dialogue between people shaped by different contexts, assumptions, and experiences. Each interaction subtly reshapes those horizons. No conversation can ever be repeated in precisely the same way.

Meaning, in other words, is co‑produced. There are no finished solutions waiting to be discovered.

Progress emerges in the space between human beings, through synthesis rather than extraction. That is not something we expect AI to replace.

Redefining Value Is the Real Opportunity

At Excelerate, we often talk about profit as an engine of choice.

Profit enables imagination and realization, and it allows organizations to build things that matter to the people inside them.

Every culture embeds its values in its economy.

Ancient Egypt mobilized extraordinary resources to build monuments for the dead because it believed the afterlife mattered profoundly.

What societies build at scale reveals what they truly value.

Today, we invest trillions in financial systems, digital infrastructure, defense, healthcare, and consumer platforms. We measure the success of those investments largely through GDP.

GDP is useful but incomplete. It captures economic output, not human flourishing. It does not account for inequality, environmental degradation, unpaid labor, or quality of life.

When future historians sift through our records and our refuse, GDP will not explain why we chose certain paths over others. They will infer our values from what we built and tolerated at scale.

Profit remains an engine of choice. The question is what choices we want to make next.

What happens if we stop clinging to the familiar simply because it feels safe? What if we allow ourselves to imagine different definitions of value—ones that reflect what we actually care about rather than what we have historically measured?

No, we are not approaching Linus-level precious naïveté; we aren’t saying we should replace GDP with GDH (Gross Domestic Hugs).

But there is no shame in being hopeful. Hope is not naïveté but rather a prerequisite for agency.

Getting Off the Sidelines

Fear, combined with speed, produces withdrawal. People check out and let the future happen to them rather than participating in its construction.

That is the real risk of this moment—not AI itself, but collective disengagement.

We are approaching a convergence of capabilities that could dramatically reduce disease, expand access to knowledge, and rebalance power. But that potential will not realize itself automatically—it requires participation.

Last year, we wrote in this very blog that no one should assume others are handling AI on their behalf. That remains true. Decisions about AI—how it is deployed, governed, and integrated—are being made now. They will shape professional lives and organizational trajectories whether people opt in or not.

This moment calls for something both simpler and harder than prediction: engagement.

Nobody is powerless, and nothing is more powerful than collective human ingenuity applied with intention.

None of this guarantees clean outcomes, of course. But disengagement guarantees something worse: a future built without the benefit of human judgment, intention, and values.

Despite the noise, the pace, and the fear, this moment remains unusually open. AI is still learning what the world is and what we want it to become.

The question is whether we participate in teaching it.

A Call to Action: Practical Ways to Participate

If disengagement is the real risk of this moment, then the response is quiet, sustained, and serious participation.

In that spirit, we are concluding this piece not with an aha insight but with practical advice on how to participate.

Here are concrete ways leaders, operators, and everyday people can get back in the game.

Build AI’s understanding intentionally

Every interaction with AI systems is a form of training, formal or informal. One of the most direct ways to influence the future these systems help shape is to be deliberate about what you feed them.

Explain context instead of issuing commands. Share how decisions are actually made in your organization. Surface edge cases, tradeoffs, values, constraints. When AI systems encounter the real texture of human work (beautifully messy, contextual, and values‑laden) they become less flattened caricatures of it.

If AI is going to mediate more decisions, then it matters what it learns about how we think.

Treat AI experiments as organizational learning rather than efficiency plays

Run small, bounded experiments framed as learning exercises, not headcount reduction initiatives. Ask what improves judgment, coordination, or insight instead of what removes people fastest.

Document not just outcomes, but surprises. Where did human nuance still matter? Where did AI accelerate understanding? Where did it distort it?

What you learn here compounds. Then go tell AI about it (see “Build AI’s understanding intentionally” above).

Reconsider what you value and what constitutes ‘original’

Do not waste time trying to sniff out whether somebody’s work was produced with AI. Evaluate the quality of the output, not what went into it.

We’ve long accepted templates as an efficient resource. Why treat AI-assisted outputs any differently?

Invest in synthesis roles, not just technical ones

As AI handles more discrete tasks, the value of the expert eye, of people who connect dots, increases. This includes product leaders, translators between technical and non‑technical teams, culture carriers, and judgment‑holders.

Do not starve these roles because they are hard to measure. They are how meaning, strategy, and coherence emerge.

Stay in the conversation, especially when it’s uncomfortable

Avoiding AI discussions does not make their consequences disappear. Participate in governance conversations, contribute to policy, and engage skeptically without retreating into cynicism.

Resist the urge to outsource responsibility to the future

The future is not something that happens later to other people. It is being assembled now through ordinary decisions about what to build, what to cut, what to measure, what to tolerate.

Opting out is still a choice, just not a tenable one.

Participation is how we shape what comes next.